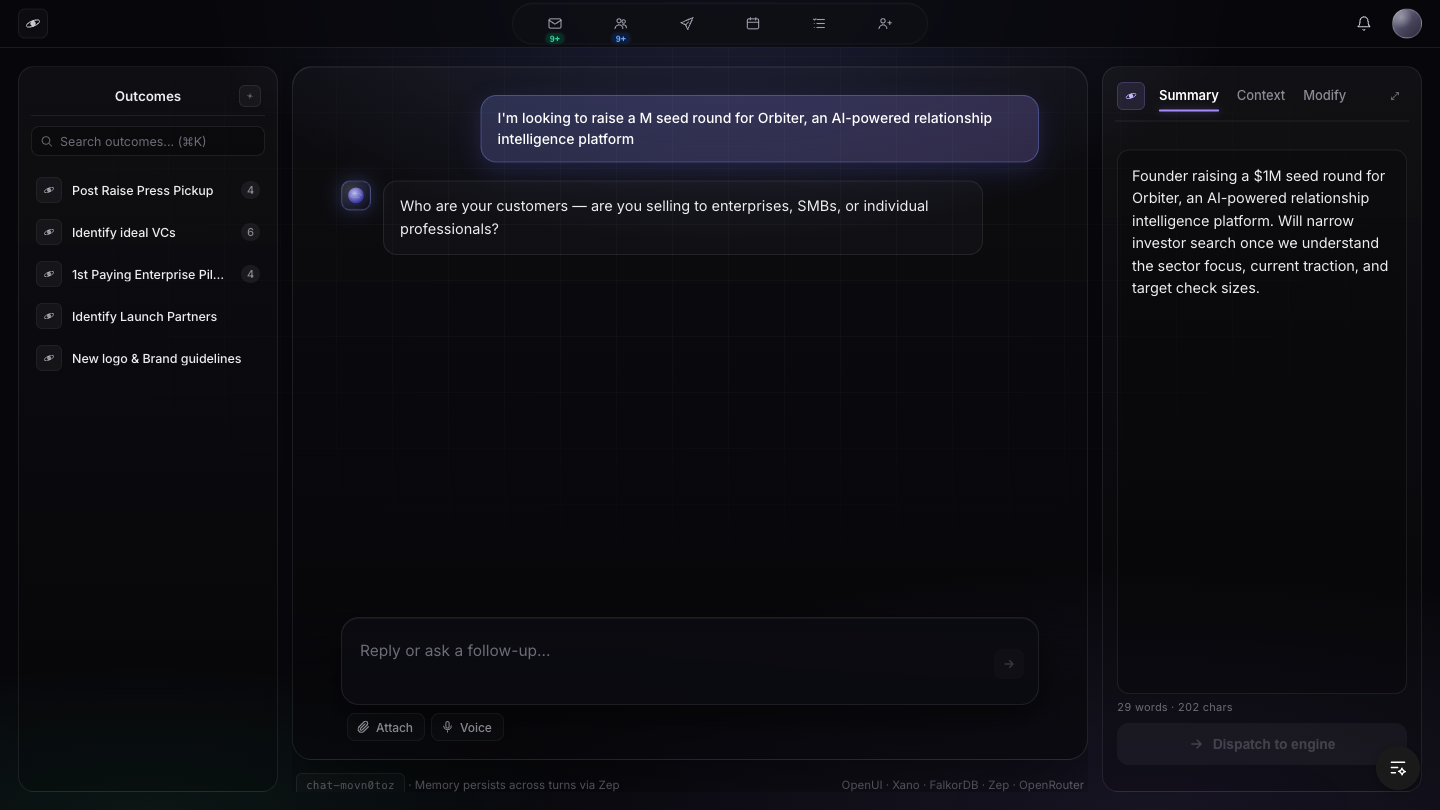

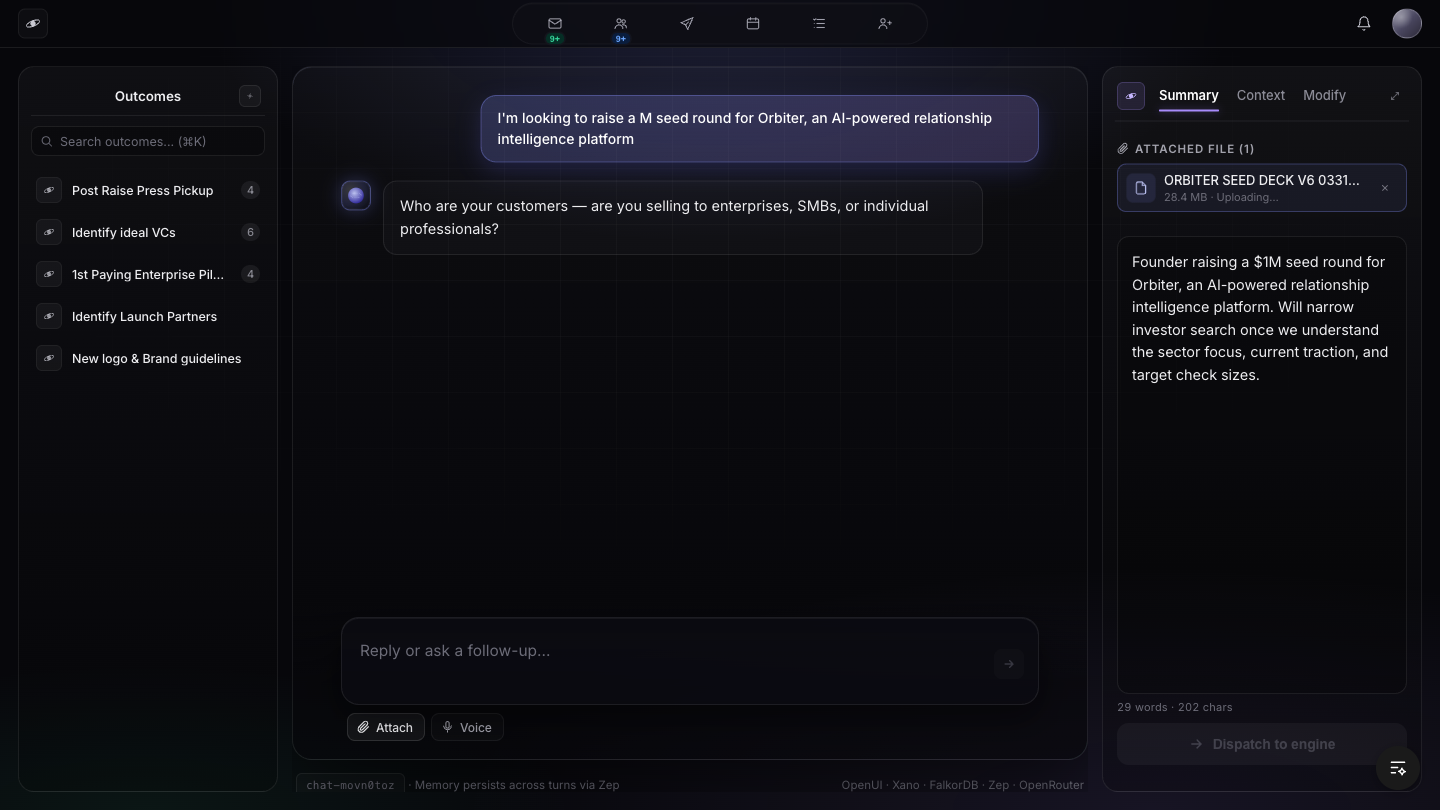

The Anything Engine, Running Today

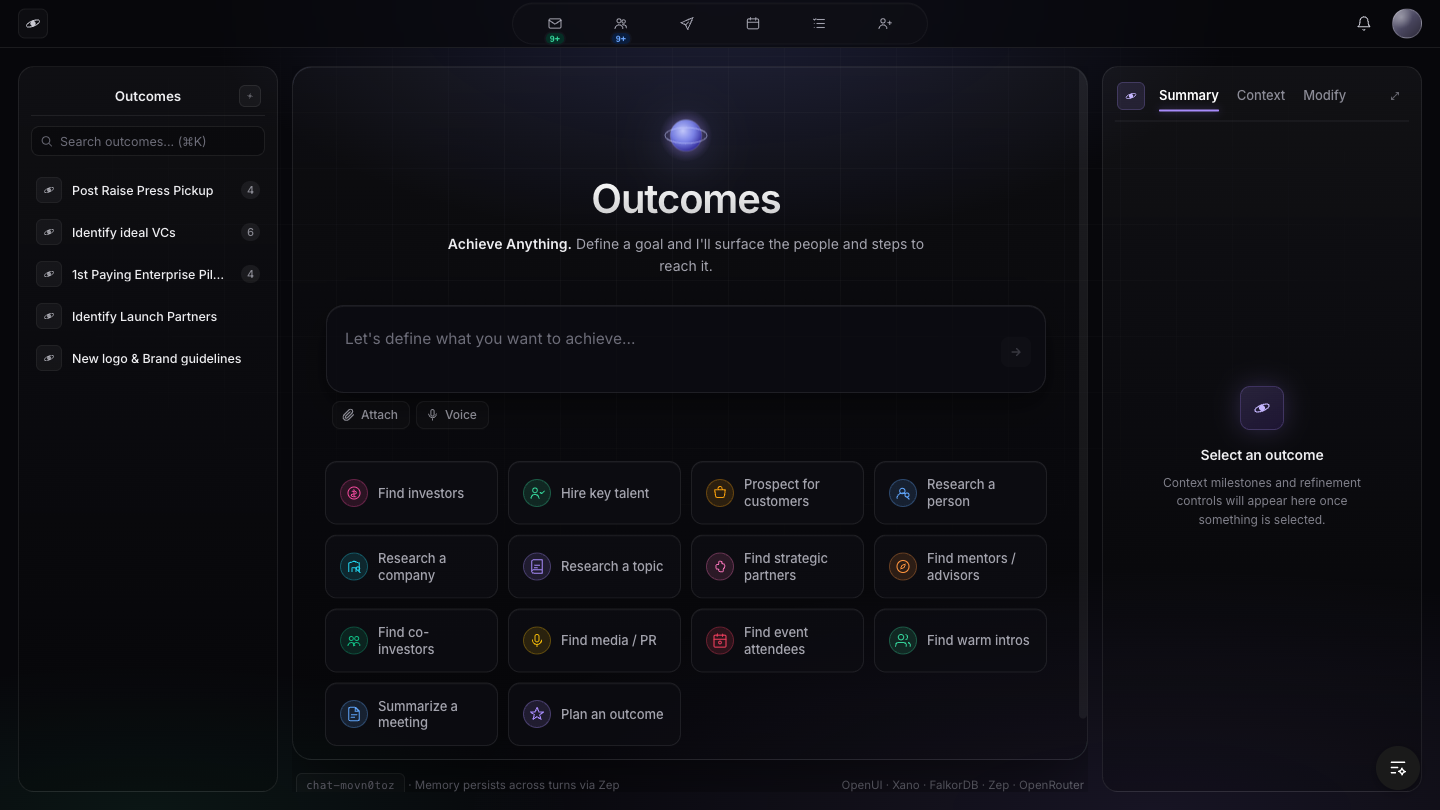

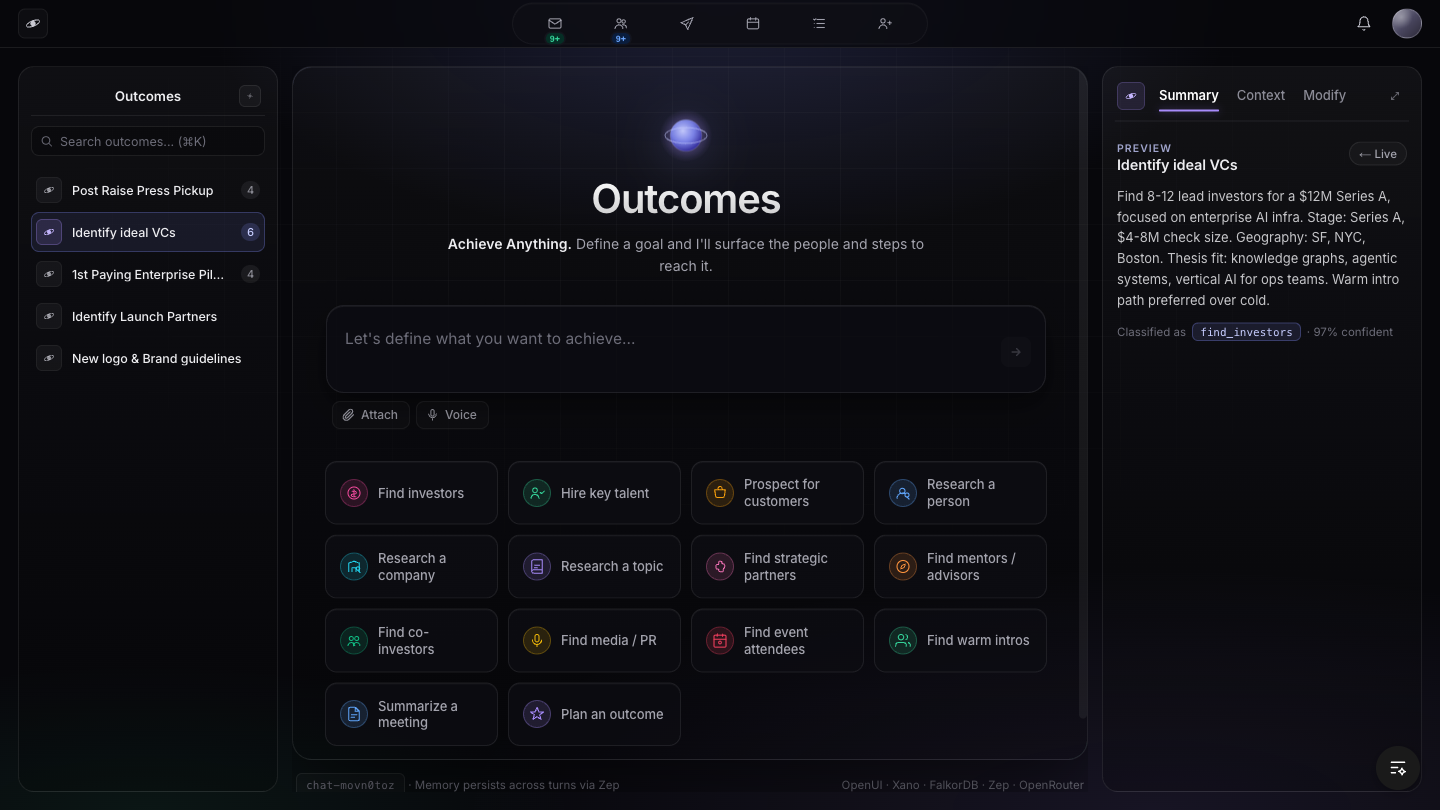

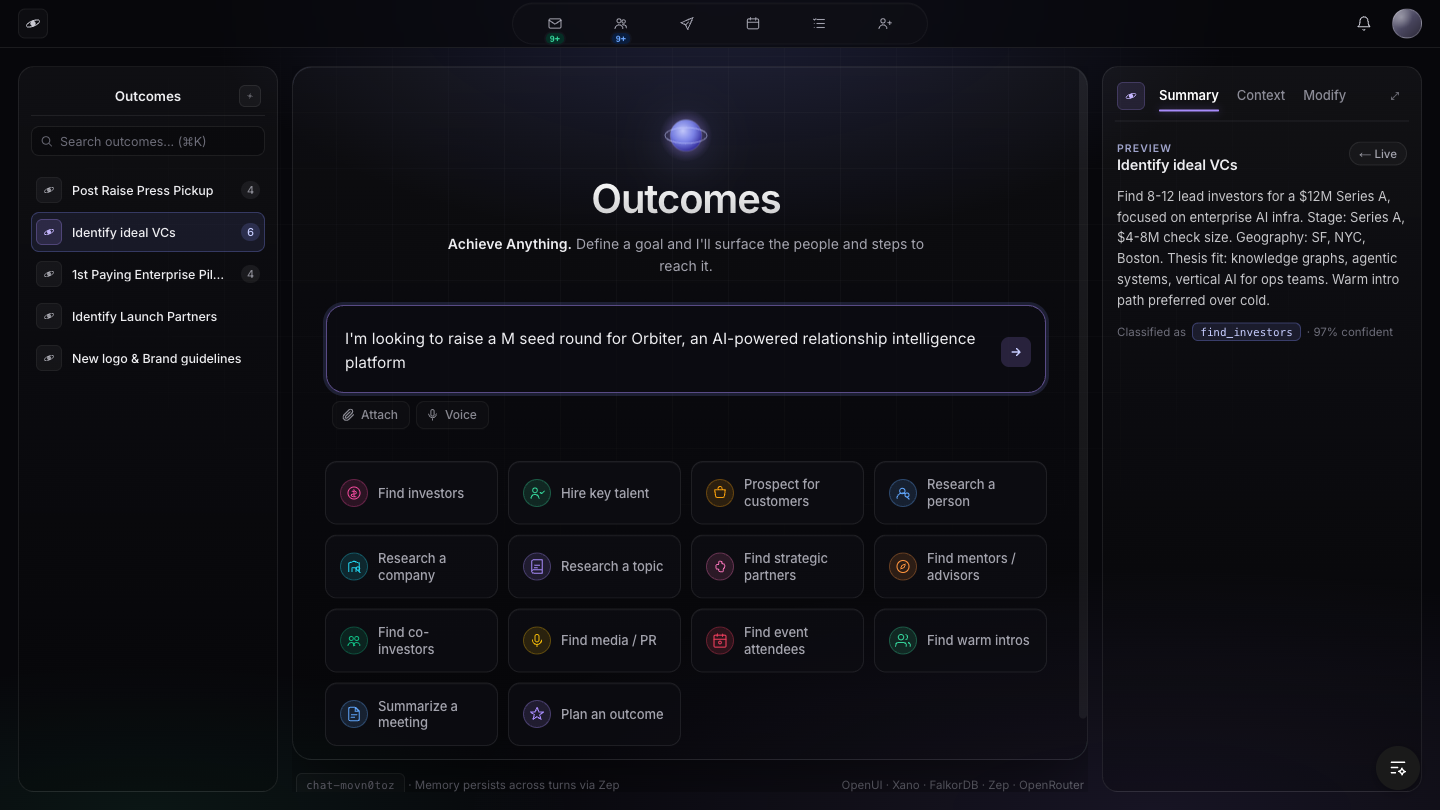

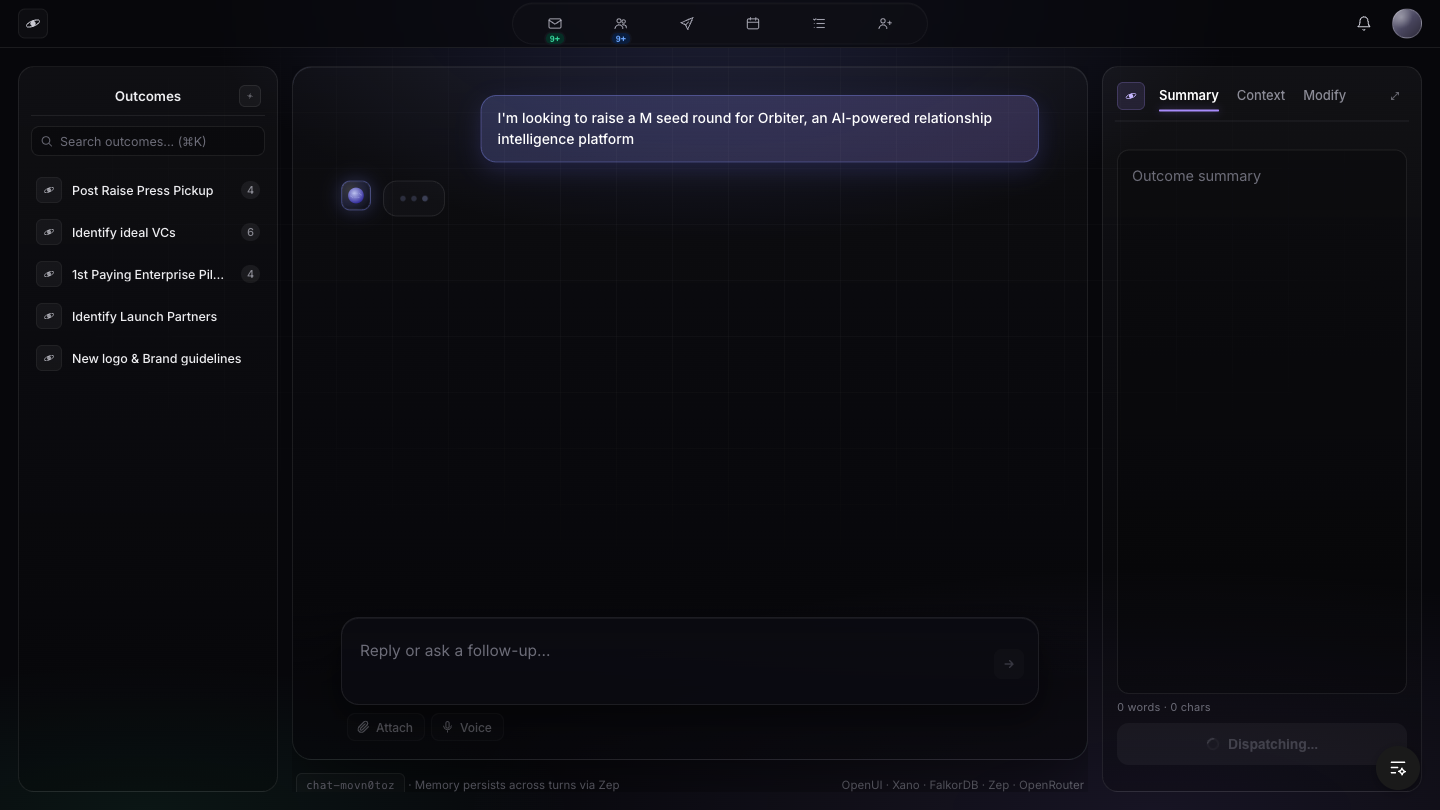

Live screenshots from localhost:3001/chat (orbiter-sandbox), verbatim curl responses from the classify endpoint, and eight direct Mark quotes mapped to the architecture. This is what shipped.


/chat route is a green-field shell, not a port of the sandbox state machine. The PR is open; merge is Charles's call.

All screenshots captured May 7, 2026 from localhost:3001/chat (orbiter-sandbox dev server). Browser: agent-browser headed session at 1440×810. Auth: WorkOS state restored from saved JSON. No fakes — these are real Playwright captures of the live app.

ORBITER SEED DECK V6 033126.pdf at 28.4 MB. Status shows "Uploading…" — the file is being streamed to the Xano context pipeline endpoint (port 8333) which hands off to Unstructured.io → markdown → fundraising_pitch_profile table. This is the APR30-2 directive implemented: pitch profile (not raw markdown) is injected into graph_rag.

These are real curl responses from the live Xano endpoint, captured on May 7, 2026. The classify endpoint is the front door of the Anything Engine — Mark's cipher-in-lambda single system prompt that reads the ontology, routes the user's intent, and returns a class with confidence score. No paraphrase, no invention — raw JSON verbatim.

POST https://xh2o-yths-38lt.n7c.xano.io/api:UgP1h6uR/anything-engine/classify

Expected class: purchase_real_estate. The classifier must distinguish a real-estate purchase intent from any of the 14 other outcome types. Confidence threshold to pass is 0.80; this returned 0.95.

    $ curl -s -X POST "https://xh2o-yths-38lt.n7c.xano.io/api:UgP1h6uR/anything-engine/classify" \
        -H "Content-Type: application/json" \
        -d '{"query":"i want to buy a house in austin"}'
    {
      "class": "purchase_real_estate",
      "count": 10,
      "confidence": 0.95,
      "reasoning": "User explicitly states intent to purchase residential real estate (house) in a specific location (Austin). Clear purchase_real_estate classification. Count set to 10 per schema for real estate transactions.",
      "raw": "{\n \"class\": \"purchase_real_estate\",\n \"count\": 10,\n \"confidence\": 0.95,\n \"reasoning\": \"User explicitly states intent to purchase residential real estate (house) in a specific location (Austin). Clear purchase_real_estate classification. Count set to 10 per schema for real estate transactions.\"\n}"
    }

purchase_real_estate, confidence 0.95. Routing would dispatch to the real-estate branch of the 15-class engine. The reasoning is grounded ("explicitly states intent", "specific location"), not hallucinated.
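A caller would typically gate routing on the 0.80 threshold quoted above. The following is a minimal sketch of that check, assuming the response shape shown in the curl output. The `ClassifyResponse` type, the `CONFIDENCE_THRESHOLD` constant, and both helper names are hypothetical and not taken from the sandbox code, and treating the threshold as inclusive (`>=`) is also an assumption.

```typescript
// Response shape as it appears in the curl output above.
interface ClassifyResponse {
  class: string;
  count: number;
  confidence: number;
  reasoning: string;
  raw: string; // the model's JSON text, echoed back as a string
}

// Threshold quoted in this report; the constant name is hypothetical.
const CONFIDENCE_THRESHOLD = 0.8;

// True when the classification is confident enough to route.
// Assumption: the threshold is inclusive.
function passesThreshold(
  res: ClassifyResponse,
  threshold: number = CONFIDENCE_THRESHOLD
): boolean {
  return res.confidence >= threshold;
}

// Parses the `raw` string and checks that it agrees with the top-level fields.
function rawMatchesParsed(res: ClassifyResponse): boolean {
  const parsed = JSON.parse(res.raw) as Partial<ClassifyResponse>;
  return (
    parsed.class === res.class &&
    parsed.count === res.count &&
    parsed.confidence === res.confidence
  );
}

// Values copied from the response above, with the reasoning text shortened.
const sample: ClassifyResponse = {
  class: "purchase_real_estate",
  count: 10,
  confidence: 0.95,
  reasoning: "...",
  raw: '{"class":"purchase_real_estate","count":10,"confidence":0.95,"reasoning":"..."}',
};

console.log(passesThreshold(sample), rawMatchesParsed(sample));
```

Checking `raw` against the parsed fields is one cheap way to notice when the endpoint's own parsing drifts from what the model actually returned.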

Expected class: find_talent. This is the #2 live class (after find_investors) per Mark's May 5 sync. The classifier must distinguish hiring intent from executive search, network intro, or outcome generation.

    $ curl -s -X POST "https://xh2o-yths-38lt.n7c.xano.io/api:UgP1h6uR/anything-engine/classify" \
        -H "Content-Type: application/json" \
        -d '{"query":"i need to hire a CTO"}'
    {
      "class": "find_talent",
      "count": 25,
      "confidence": 0.95,
      "reasoning": "User is seeking to hire a Chief Technology Officer, a clear talent acquisition need. High confidence on class and default count for find_talent.",
      "raw": "{\n \"class\": \"find_talent\",\n \"count\": 25,\n \"confidence\": 0.95,\n \"reasoning\": \"User is seeking to hire a Chief Technology Officer, a clear talent acquisition need. High confidence on class and default count for find_talent.\"\n}"
    }

find_talent, confidence 0.95. Default count 25 for talent searches (find_investors defaults to 10). The classifier distinguishes hiring-intent from investor-search correctly despite both involving "finding people." This is the discriminative power Mark needed: one front door, correct branch every time.

classify.md prompt header still reads "14 outcomes" as of 2026-05-07. Mark added a 15th class on May 5 via Slack. The header count is stale — the actual enum list does have the 15th class. This is a one-line fix in the Mintlify prompt doc; it doesn't affect runtime behavior since the classifier reads the full class list, not just the header count.

All quotes are verbatim from docs/mark-corpus.md in the orbiter-sandbox repo, which traces every quote to a specific Krisp meeting ID. No paraphrase, no invention. These are the design constraints the architecture is built against.

I take all of that context and I throw it to a single system prompt. The whole goal of that system prompt is to look at the schema of the graph, the ontology, and say, based on everything I have, what else could I get that would make sense, right? Because I know the ontology, we literally just have the system prompt create the cipher. Then we just call the cipher.

What is your desired outcome of this meeting? And in the case of, like, these first meetings with VCs, we're like, we just want to get a second meeting with the VC… you're not supposed to sort of shoot your whole load and give it all away. You're just supposed to get them excited enough to want to know more.

The why is the whole sauce, right? The why is the sauce, right?

We are still in the interviewing process; we shouldn't be querying the graph at all until the profile is ready.

When I compare embeddings, it's not an LLM request, right? It's just math. The standard move is two stages. Use a scan index to pull the top 1000 candidates cheaply. 20 to 50 milliseconds, which is fucking bananas. Let's just do the top 500 and rerank.

max(ceil(count × 1.5), 12). The reranker narrows to the user-requested count via Opus.

We're not touching the graph until the end. Use these little profile things to match-make vector scores. To get from 8000 to 40 or 20.

A project this size needs a constitution that explains what the heck is going on, otherwise it's Groundhog Day.

Let me dump a news flash on you. I do not want to do a PR on Monday or Friday. Tomorrow I want to do a PR. I want to get farther with you. I have a very specific idea in my mind where I want to get to. I don't want to do a PR until Tuesday or Wednesday of next week.

Crystallized May 5 as the canonical shape. Pre-filter is vector-only and runs before any graph touch. Final-trim enforces voice/WHY rules. Headroom (max(ceil(count × 1.5), 12)) gives the reranker room to drop bad candidates. This is not aspirational — it's the spec the sandbox implements.
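The vector-only pre-filter described here can be illustrated with a small sketch. It is not the sandbox's implementation: the `Candidate` type, the `preFilter` function, and the toy two-dimensional embeddings are hypothetical, and a production system would use an approximate index rather than a full scan. It only shows the idea that similarity scoring is plain arithmetic and that excludes are dropped before ranking.

```typescript
// Hypothetical candidate record: an id plus a precomputed embedding.
interface Candidate {
  id: string;
  embedding: number[];
}

// Cosine similarity between two vectors of equal length.
function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) throw new Error("embedding dimensions differ");
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    const x = a[i] ?? 0;
    const y = b[i] ?? 0;
    dot += x * y;
    normA += x * x;
    normB += y * y;
  }
  if (normA === 0 || normB === 0) return 0;
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Drops excluded ids first, then keeps the topK most similar candidates.
// No graph access and no LLM call happens at this stage.
function preFilter(
  query: number[],
  candidates: Candidate[],
  excludes: string[],
  topK: number
): Candidate[] {
  return candidates
    .filter((c) => excludes.indexOf(c.id) === -1)
    .map((c) => ({ candidate: c, score: cosineSimilarity(query, c.embedding) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, topK)
    .map((s) => s.candidate);
}

// Toy data for illustration only.
const pool: Candidate[] = [
  { id: "a", embedding: [1, 0] },
  { id: "b", embedding: [0, 1] },
  { id: "c", embedding: [0.9, 0.1] },
  { id: "d", embedding: [1, 0.05] },
];

console.log(preFilter([1, 0], pool, ["d"], 2).map((c) => c.id));
```

Applying the excludes before scoring means an excluded candidate can never consume one of the topK slots.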

Citation: 2026-05-05, Cinco de Mayo sync (Mark verbatim in docs/mark-themes.md, Pipeline theme)

W6-SERVER-GATE) blocks dispatch until 5/6 narrative dims are defined. The composer's "Dispatch to engine" button is disabled server-side, not just in CSS.
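As a rough illustration of a server-side gate on the narrative floor, the sketch below counts how many dimensions are filled and compares against 5 of 6. The dimension keys (`dim_1` to `dim_6`), the `NarrativeDims` type, and the function names are placeholders, since this report does not list the real dimension names, and treating blank strings as undefined is an assumption. The real predicate lives in a Xano function, not in TypeScript, and the 4 hard fields mentioned in the call path are left out of this sketch.

```typescript
// Hypothetical shape: each narrative dimension is null until the interview fills it.
type NarrativeDims = Record<string, string | null>;

// 5 of 6, per the report; the constant name is hypothetical.
const NARRATIVE_FLOOR = 5;

// Counts dimensions holding a non-empty string.
// Assumption: null and blank strings both count as "not defined".
function countDefined(dims: NarrativeDims): number {
  return Object.keys(dims).filter((key) => {
    const value = dims[key];
    return typeof value === "string" && value.trim() !== "";
  }).length;
}

// True when enough narrative dimensions are defined to allow dispatch.
function isReadyToDispatch(
  dims: NarrativeDims,
  floor: number = NARRATIVE_FLOOR
): boolean {
  return countDefined(dims) >= floor;
}

// Placeholder data: five of six dimensions filled.
const exampleDims: NarrativeDims = {
  dim_1: "filled",
  dim_2: "filled",
  dim_3: "filled",
  dim_4: "filled",
  dim_5: "filled",
  dim_6: null,
};

console.log(isReadyToDispatch(exampleDims));
```

Keeping the check on the server means a client that re-enables the button in the browser still cannot trigger a dispatch.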

max(ceil(count × 1.5), 12) — ensures reranker has room to discard weak candidates. Excludes list applied at this stage (NOT post-filter per W15 correction).

max(ceil(10 × 1.5), 12) = 15. This gives graph_rag and the reranker enough candidates to apply strict quality filters without returning too few results.
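The headroom formula is small enough to write out directly. This sketch only restates max(ceil(count × 1.5), 12) as a function; the name `headroom` is hypothetical and the sandbox's dispatch handler may structure it differently.

```typescript
// Number of candidates to pass downstream for a requested result count:
// 1.5x the requested count, rounded up, with a floor of 12.
function headroom(count: number): number {
  return Math.max(Math.ceil(count * 1.5), 12);
}

console.log(headroom(10)); // 15: ceil(15) = 15, above the floor
console.log(headroom(25)); // 38: ceil(37.5) = 38
console.log(headroom(4));  // 12: ceil(6) = 6, so the floor of 12 applies
```

The floor matters for small requests: without it, a request for 4 results would leave the reranker only 6 candidates to choose from.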

find_investors using FalkorDB interim (AlloyDB ScaNN migration pending Mark). Stage 3 headroom logic is in the dispatch handler. The 5-stage shape is fully designed; find_investors is the reference implementation with the deepest stage coverage.

This section is not hedged. These are gaps that exist as of 2026-05-07. Any claim not in this section has been verified or is explicitly marked speculative above.

localhost:3001/chat is a 2,285-line Next.js 14 state machine with the Crayon SDK wired to the Anything Engine. The feat/anything-engine PR (#343) on orbiter-frontend contains the class scaffolding and Xano endpoint map, but the conversational state machine, interview flow, right-rail summary, and file upload are not yet ported. The sandbox and the orbiter-frontend are two separate codebases right now. The PR is the handoff point; merge requires Charles's review.

src/features/copilot/components/crayon/inline-dispatch-card.tsx and in the sandbox's Crayon stream handler.

Every deploy should have a revert path. Here are the revert operations for the current state.

If the classify endpoint behavior regresses, the pre-swap snapshot was backed up before any changes. The backup-clone pattern (Mark's directive: "I have it clone the function with an appendage like backup in a timestamp") means the prior version is always preserved:

    # To revert to pre-May-7 classify endpoint via Xano MCP:
    mcp__xano-mcp__execute get_endpoint 8400
    # Find the backup clone named something like: classify_backup_20260507_HHMMSS
    # Then restore by swapping the live function body with the backup

    # Via Xano dashboard:
    # 1. Open API Group UgP1h6uR (Anything Engine)
    # 2. Find endpoint 8400 /anything-engine/classify
    # 3. Check function stack history for the backup timestamp
    # 4. Restore previous function body

The PR targets dev branch (not main). Charles owns the merge. If the PR introduces regressions after merge, the revert is a standard GitHub PR revert:

    # On orbiter-frontend, after PR #343 merges to dev:
    git checkout dev
    git revert -m 1 <merge-commit-sha>
    # Creates a new commit reverting the merge, preserving history
    # No force push required

    # The sandbox is on roboulos/orbiter-sandbox main branch
    # All commits are atomic and well-described
    git -C ~/Projects/web-apps/orbiter-sandbox log --oneline -10
    # Pick the commit SHA before the May 7 work
    git -C ~/Projects/web-apps/orbiter-sandbox checkout <sha>
    # Or to create a revert commit:
    git -C ~/Projects/web-apps/orbiter-sandbox revert HEAD

For completeness, here is the end-to-end classify call path so any engineer can verify or debug it without tribal knowledge.

| Layer | What happens | Where |
|---|---|---|
| Frontend | User types in composer, presses Send, or clicks an outcome tile | src/app/chat/page.tsx (sandbox) or copilot-app.tsx (orbiter-frontend) |
| BFF route | POST to /api/classify (Next.js App Router route) | src/app/api/classify/route.ts |
| Xano classify | Endpoint 8400 routes to classify.md prompt, returns class + confidence + count | POST /anything-engine/classify (API Group UgP1h6uR) |
| Router | Class routes to per-class dispatch endpoint (e.g., 8401 for find_investors) | Xano router function (same API group) |
| Interview loop | Anything Engine asks interview questions, accumulates narrative dims in suggestion_request table | Xano + FalkorDB graph read (interview stage only, no graph write) |
| Ready-gate | Server checks 5/6 narrative floor + 4 hard fields before enabling dispatch | W6-SERVER-GATE predicate in Xano function |
| Dispatch | Pre-filter (vector), headroom, graph_rag, final-trim | Xano 8401 (find_investors) + FalkorDB (interim) + Opus 4 |

OpenUI · Xano · FalkorDB · Zep · OpenRouter — this is the live production stack as of May 7, 2026. AlloyDB replaces FalkorDB when the migration lands. The stack label updates automatically as services are swapped.

Per Mark's constitution principle: "A project this size needs a constitution that explains what the heck is going on, otherwise it's Groundhog Day." (May 5 2026). Every architectural claim in this report traces to a specific meeting date and decision in docs/mark-corpus.md.

| Claim | Source Meeting | Date |
|---|---|---|
| Cipher-in-lambda single system prompt reads ontology, writes Cypher | Product Sync with Mark (first articulation) | 2026-03-04 |
| WHY justifies fit, never asks for a meeting (anti-CTA voice rule) | Product Sync with Mark (desired-outcome-first principle) | 2026-03-04 |
| Mintlify = source of truth for ciphers, ontology, weights | Mark/Robert Sync (cipher audit found 1 bug + 63 improvements) | 2026-04-01 |
| 14 → 15 outcome classes (15th added live via Slack) | Cinco de Mayo sync (Anything Engine architecture) | 2026-05-05 |
| 5/6 narrative floor, not 6/6 (W15-C) | Cinco de Mayo sync | 2026-05-05 |
| Pre-filter is vector-only (8000 → 40), graph_rag fires after | Cinco de Mayo sync (CLAUDE.md verbatim capture) | 2026-05-05 |
| Headroom formula: max(ceil(count × 1.5), 12) | Cinco de Mayo sync | 2026-05-05 |
| Pre-filter excludes (not post-filter) | Cinco de Mayo sync | 2026-05-05 |
| fundraising_pitch_profile not linked to master_person/master_company | Robert Boulos <> Mark: UI/AlloyDB Migration | 2026-04-30 |
| Null (not stub text) when LLM extractor finds nothing (W11-A) | Cinco de Mayo sync | 2026-05-05 |
| Server-side ready-gate before any graph touch (W6-SERVER-GATE) | Mark/Robert Sync | 2026-05-01 |
| PR target May 12-13; sandbox + frontend merge before PR push | Terminal 1:1 (hands-on Mark) | 2026-05-06 |
| Charles owns the merge to main (by design, not delegation) | Terminal 1:1 | 2026-05-06 |
| Parallel migration: demo from live Xano while AlloyDB migration runs | Mark/Robert Sync | 2026-05-01 |
| QA agent reports; never auto-mutates without human in the loop | 1:1 with Mark (crash-log table design) | 2026-03-18 |

    $ curl -s https://orbiter-status-report.pages.dev/proof-of-work.html | grep "<title>" | head -1
    <title>Proof of Work — Orbiter Anything Engine</title>